In the past few years, artificial intelligence has trickled into the entertainment industry, making its mark on film, television and music. Despite its rapid advancement, AI remains an enigma to many, and there is minimal legislation regulating the technology. In recent days, it has become part of the conversation surrounding Hollywood's writers' strike, the first in 15 years.

Given the seemingly limitless ways the technology can be used, actors and musicians are beginning to speak out about AI being used in conjunction with their names and likenesses. Some are selling those rights to AI companies, while others are adding provisions to their contracts to prevent their likenesses from being manipulated by the technology.

“AI is not one thing that is either good or bad,” Tobias Queisser, founder and CEO of business intelligence platform Cinelytic, told Fox News Digital.

“AI’s been around for a long time,” Liz Moody, senior partner and chair of New Media Practice at Granderson Des Rochers, LLP, added. “What I’m finding is that there is a lot of confusion right now and a lot of fear about what’s going to happen next [within the entertainment space].”


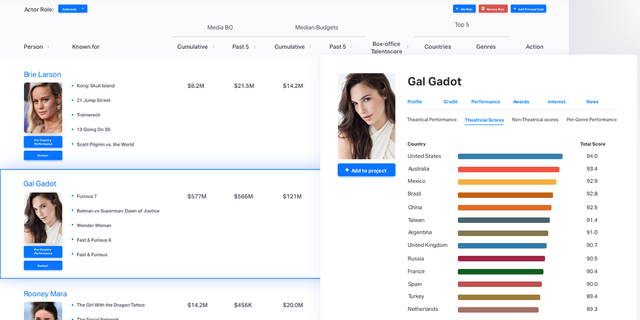

Business intelligence company Cinelytic licenses software that helps users forecast how profitable a film will be. (Cinelytic.com)

Actors such as Keanu Reeves and Harrison Ford have shown very different levels of enthusiasm for AI. Here's a look at what stars have said about the use of artificial intelligence in the entertainment industry.

Harrison Ford

Harrison Ford is reprising his role in the fifth installment of "Indiana Jones." (Jesse Grant/Getty Images for Disney)

In the upcoming film “Indiana Jones and the Dial of Destiny,” artificial intelligence is used to reimagine Harrison Ford’s face as if he were still 35 years old.

“They have this artificial intelligence program that can go through every foot of film that Lucasfilm owns,” Ford said of George Lucas’ production company on “The Late Show with Stephen Colbert.”

“I did a bunch of movies for them. They have all this footage, including film that wasn’t printed. So they can mine it from where the light is coming from, from the expression. I don’t know how they do it. But that’s my actual face,” Ford said of how he appears so young in a promotional still from the movie. “Then I put little dots on my face, and I say the words, and they make [it]. It’s fantastic.”

James Mangold, the director of the film, told Total Film magazine, “We had hundreds of hours of footage of [Ford] in close-ups, in mediums, in wides, in every kind of lighting — night and day.

“I could shoot Harrison on a Monday as, you know, a 79-year-old playing a 35-year-old, and I could see dailies by Wednesday with his head already replaced,” Mangold explained.

Through the use of AI, Harrison Ford appears in the new film as if he were 35 years old. (Disney/Lucasfilm)

That kind of speed is what has drawn executives and producers to other AI tools in the entertainment industry, including Queisser's Cinelytic. The platform includes a forecasting tool that predicts multiple revenue streams: domestic and international box office, as well as non-theatrical revenue such as streaming.

"Whether it's a studio or an independent film company, they use the platform themselves, so they get access to the tools and the data. And so they could then log in basically and say like, 'Hey … which actors, directors, writers, producers are … valuable for my type of project?' Yes, that's a question that the system can answer," Queisser, whose clients include Warner Bros., said of the cloud-based business intelligence platform.

“It can put together a list of potential, you know, actors, writers, directors very quickly just by using the system,” he added. “And then there’s a forecasting tool where they themselves can then input their project. So I have a, whatever, $20 million production budget, thriller, that’s based on a book and I consider that director, that actor, that producer, and you can forecast it. And then you can change the actor, for example. Then you can rerun the forecasts, and it gives you a different forecast.”

A process that previously took “20, 30 hours” is now “20 minutes,” Queisser said.

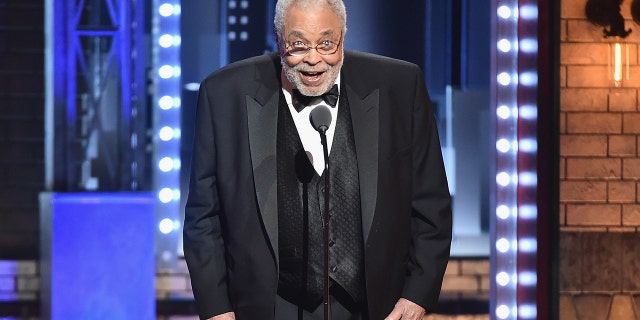

James Earl Jones

In 2022, it was announced James Earl Jones would be retiring from voicing Darth Vader. (Theo Wargo/Getty Images for Tony Awards Productions)

James Earl Jones, 92, has long voiced Darth Vader in the "Star Wars" franchise, another Lucasfilm property. In 2022, Jones announced he was retiring from future projects, although his voice would continue to be used.

Ukrainian startup Respeecher partnered with Lucasfilm to recreate Jones' voice from 45 years ago for the television series "Obi-Wan Kenobi."

Respeecher makes voice-cloning software that uses archival recordings and AI algorithms to generate new dialogue in the voice of a previous performer.

James Earl Jones still consults with creatives over the direction of his character. (20th Century Studios per Everett Collection)

Matthew Wood of Lucasfilm told Vanity Fair that Jones intended to move on from his famous role.

“He had mentioned he was looking into winding down this particular character. … So how do we move forward?” Wood said.

Wood introduced the famed actor to Respeecher's work, which led Jones to sign off on the use of his archived voice recordings. He is still consulted on the character's direction.

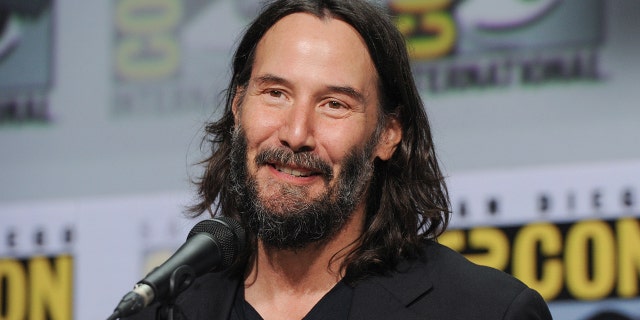

Keanu Reeves

Keanu Reeves isn’t sold on artificial intelligence despite being in “The Matrix.” (Albert L. Ortega)


Reeves starred in "The Matrix," which many consider the ultimate franchise foreshadowing AI, but he is not as receptive to the new technology as Ford or Jones.

The “John Wick” actor has a clause in his contracts that prohibits digital manipulation without his consent.

“I don’t mind if someone takes a blink out during an edit,” Reeves told Wired. “But, early on, in the early 2000s, or it might have been the ’90s, I had a performance changed. They added a tear to my face, and I was just like, ‘Huh?!’ It was like, ‘I don’t even have to be here.’

“What’s frustrating about that is you lose your agency,” Reeves added of deepfakes. “When you give a performance in a film, you know you’re going to be edited, but you’re participating in that. If you go into deepfake land, it has none of your points of view.

“That’s scary. It’s going to be interesting to see how humans deal with these technologies. They’re having such cultural, sociological impacts and the species is being studied. There’s so much ‘data’ on behaviors now.”

Keanu Reeves has a stipulation in his contracts that his performances cannot be digitally manipulated without his consent. (Ronald Siemoneit/Sygma/Sygma)

The Oxford dictionary defines a deepfake as "a video of a person in which their face or body has been digitally altered so that they appear to be someone else, typically used maliciously or to spread false information."

“People are growing up with these tools. We’re listening to music already that’s made by AI in the style of Nirvana. There’s NFT digital art,” Reeves added. “It’s cool, like, ‘Look what the cute machines can make!’ But there’s a corporatocracy behind it that’s looking to control those things. Culturally, socially, we’re gonna be confronted by the value of real, or the non-value. And then what’s going to be pushed on us? What’s going to be presented to us?”

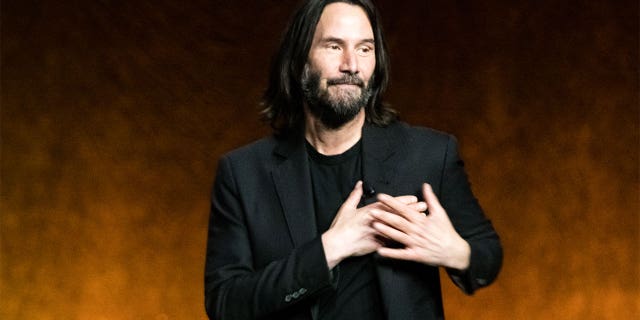

Jonathan Steinsapir, a partner at Kinsella Weitzman Iser Kump Holley LLP, told Fox News Digital there are two main reasons a celebrity might add a clause like Reeves' to a contract.

“As to why you would want to prevent it, I think it’s just one more, one more protection. If you have a right to prevent use of your image or use of your, you know, voice. … If you have a right to prevent that, that means that if someone wants to do it, they have to pay you to do it.

“So the reason to do it would be … economics so that you could exploit that in the future. And the second reason, obviously, is because you want to control. … What you put out there is used as a moral, you know, as a moral matter.”

Lawyers in the industry believe it's entirely plausible that entertainers will start amending their contracts to include provisions about AI use, as Keanu Reeves has. (Greg Doherty)


Despite his own involvement in AI, Cinelytic's Queisser agrees that some of what the technology is capable of is problematic.

“I think it definitely needs regulation because it is a very powerful tool,” Queisser said. “And like every, you know, powerful tool that can impact society, it needs to be regulated. … Certainly, AI definitely needs to be regulated.

“It’s important to take a very nuanced and educated approach about what it means,” he added. “And then certain use cases … that have potential negative impacts should definitely be regulated. … I’m sure the legal side has to catch up. … Whether it’s regulation or whether it’s, you know, just attorney work and contracting. I’m sure things will change to [adapt] to that new environment.”

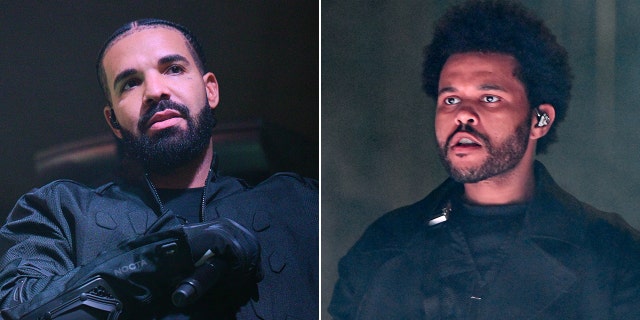

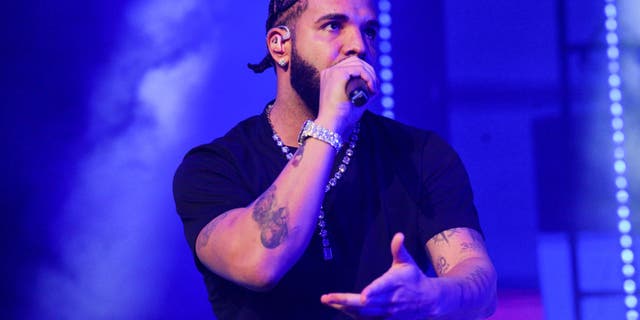

Drake and The Weeknd

A song using artificial intelligence was created by a TikTok user to sound like the voices of musicians Drake and The Weeknd. (Prince Williams/Paras Griffin)

Last month, musicians Drake and The Weeknd found themselves at the center of an AI controversy when a song was released on social media featuring their voices, or vocals eerily similar to both men's distinctive sound.

A TikTok user known as "GhostWriter" posted the song, "Heart on My Sleeve," which amassed millions of views. It later appeared on streaming services, including Spotify and Apple Music, but was eventually taken down.

Universal Music Group, which represents Drake, released a statement about the viral song.

“UMG’s success has been, in part, due to embracing new technology and putting it to work for our artists – as we have been doing with our own innovation around AI for some time already,” a spokesperson for UMG told Fox News Digital.

"With that said, however, the training of generative AI using our artists' music (which represents both a breach of our agreements and a violation of copyright law), as well as the availability of infringing content created with generative AI on DSPs, begs the question as to which side of history all stakeholders in the music ecosystem want to be on: the side of artists, fans and human creative expression or on the side of deep fakes, fraud and denying artists their due compensation."


Drake previously voiced his issues with AI-generated music. (Prince Williams/Wireimage)

“These instances demonstrate why platforms have a fundamental legal and ethical responsibility to prevent the use of their services in ways that harm artists,” the UMG spokesperson added. “We’re encouraged by the engagement of our platform partners on these issues – as they recognize they need to be part of the solution.”

Drake had separately voiced his frustration with AI after the technology was used to create what sounded like him rapping a cover of fellow rapper Ice Spice's song "Munch."

“This is the final straw AI,” Drake wrote to his Instagram in April.

Grimes

Grimes revealed she would be open to her voice being used in AI-created tracks in exchange for a 50% royalty split. (Taylor Hill)


Following the controversy surrounding the AI-generated song mimicking Drake and The Weeknd, fellow musician Grimes, who previously dated Twitter CEO Elon Musk, shared her views on the royalties that AI-generated music could earn.

"I'll split 50% on any successful AI generated song that uses my voice," she tweeted, sharing an article about the fake song scandal. "Same deal as I would with any artist I collab with. Feel free to use my voice without penalty. I have no label and no legal bindings."

“I think it’s cool to be fused w a machine and I like the idea of open sourcing all art and killing copyright,” she said in a separate tweet.